Key Takeaways

- Global electricity demand from data centers is projected to reach approximately 945 TWh by 2030, driven largely by AI.

- Data centers in the US are expected to consume about 8% of the nation’s electricity by 2030, up from 3% in 2022.

- VSORA’s Jotunn 8 chip is designed for efficient AI inferencing, offering 3.2 petaflops of processing power and reduced power consumption.

The International Energy Agency (IEA) forecasts that global electricity consumption from data centers will more than double, reaching approximately 945 terawatt-hours (TWh) by 2030. This surge is driven primarily by AI-optimized data centers. A separate Deloitte report anticipates that AI-related data center power usage could hit around 90 TWh by 2026, which would represent roughly 13% of total data center consumption, projected at 681 TWh. In the United States, data centers are expected to consume about 8% of the national electricity supply by 2030, an increase from 3% in 2022. Utility delays for data center grid connections can currently extend up to seven years, raising concerns about the electric grid’s capacity to meet this growing demand.
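The share arithmetic behind these forecasts is easy to check. The short Python sketch below uses only the figures quoted in the paragraph above. The variable and function names are illustrative and do not come from the IEA or Deloitte reports.

```python
# Figures quoted in the text, in terawatt-hours (TWh) per year.
AI_DC_TWH_2026 = 90.0       # Deloitte: AI-related data center usage, 2026
TOTAL_DC_TWH_2026 = 681.0   # Deloitte: total data center usage, 2026
GLOBAL_DC_TWH_2030 = 945.0  # IEA: global data center usage, 2030


def share_percent(part: float, whole: float) -> float:
    """Return `part` as a percentage of `whole`."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100.0 * part / whole


# AI-related share of total data center consumption in 2026.
ai_share_2026 = share_percent(AI_DC_TWH_2026, TOTAL_DC_TWH_2026)

# "More than double" to reach 945 TWh implies a starting level
# below half of that figure.
implied_baseline_ceiling_twh = GLOBAL_DC_TWH_2030 / 2

print(f"AI share of data center demand in 2026: {ai_share_2026:.1f}%")
print(f"Implied starting level: below {implied_baseline_ceiling_twh:.1f} TWh")
```

Dividing the quoted figures puts the AI-related share at a little over 13%, and the "more than double" wording implies a baseline below roughly 472.5 TWh.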

Google CEO Sundar Pichai has indicated that new data centers may require over 1 gigawatt of power, comparable to a large nuclear reactor’s output. Demand for AI is nonetheless not expected to decline: Gartner estimates a compound annual growth rate (CAGR) of 40-50% in demand for AI inference compute through 2027. The International Data Corporation (IDC) also predicts that by 2028, 80-90% of AI compute in the cloud will go to inference, as trained models are deployed and queried continuously.
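To put these numbers in perspective, the sketch below converts a 1 GW load into annual energy and compounds the quoted CAGR range. Two inputs are assumptions rather than figures from the article: the site is taken to run at full load all year, and the three-year compounding window is hypothetical, since the text gives no base year for Gartner's estimate.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def annual_energy_twh(power_gw: float, load_factor: float = 1.0) -> float:
    """Energy in TWh used in one year by a load of `power_gw` gigawatts.

    load_factor=1.0 assumes continuous full-load operation (an upper bound).
    """
    return power_gw * HOURS_PER_YEAR * load_factor / 1000.0  # GWh -> TWh


def growth_multiple(cagr: float, years: int) -> float:
    """Total growth factor after compounding `cagr` for `years` years."""
    return (1.0 + cagr) ** years


ASSUMED_YEARS = 3  # hypothetical window; the article states no base year

one_gw_year_twh = annual_energy_twh(1.0)
low_multiple = growth_multiple(0.40, ASSUMED_YEARS)
high_multiple = growth_multiple(0.50, ASSUMED_YEARS)

print(f"1 GW at full load for a year: {one_gw_year_twh:.2f} TWh")
print(f"Compute demand after {ASSUMED_YEARS} years: "
      f"{low_multiple:.2f}x to {high_multiple:.2f}x")
```

Under these assumptions, a single 1 GW site would draw 8.76 TWh per year, and inference compute demand would grow to roughly 2.7 to 3.4 times its starting level over three years.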

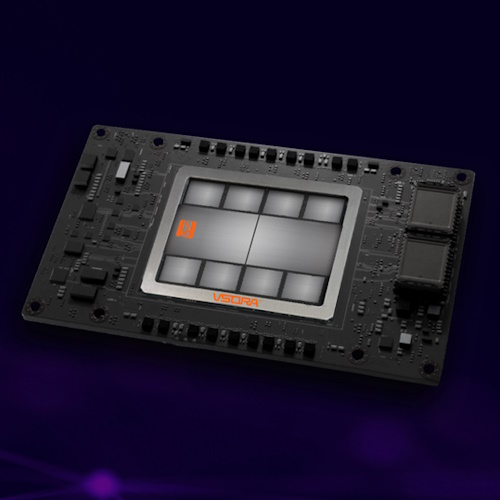

The article highlights VSORA, a French semiconductor company that specializes in highly efficient chips for real-time AI inference. Founded in 2015, VSORA transitioned from an intellectual property (IP) provider into a company that brings its own chips to market. Its flagship product, the Jotunn 8, is built on TSMC’s 5nm process and delivers 3.2 petaflops of processing power. The chip combines eight chiplets with high-bandwidth memory.

Each VSORA chiplet incorporates two AI cores and five RISC-V processors, allowing complex algorithms to be executed directly on the chiplets without overloading the host processor. This design is rooted in their original focus on automotive technology and emphasizes functional safety and performance efficiency.

The Jotunn 8 supports a range of numerical formats and data bandwidths. The architecture provides 256 million registers across the chip, which improves data accessibility and processing speed. Traditional architectures often waste cycles preparing data, whereas VSORA’s design merges memory components into one unit to reduce that overhead.
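The per-chip figures in the preceding paragraphs can be broken down per chiplet and per core. The sketch below does this under two labeled assumptions: peak throughput and registers are split evenly across the AI cores, and "million" means 10^6. The `ChipSpec` class is a hypothetical helper for illustration, not a VSORA API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChipSpec:
    """Headline figures for a chiplet-based accelerator (illustrative only)."""
    chiplets: int
    ai_cores_per_chiplet: int
    riscv_per_chiplet: int
    peak_petaflops: float
    registers: int

    @property
    def ai_cores(self) -> int:
        return self.chiplets * self.ai_cores_per_chiplet

    @property
    def riscv_processors(self) -> int:
        return self.chiplets * self.riscv_per_chiplet

    @property
    def petaflops_per_ai_core(self) -> float:
        # Assumes peak throughput is split evenly across AI cores.
        return self.peak_petaflops / self.ai_cores

    @property
    def registers_per_ai_core(self) -> int:
        # Assumes registers are split evenly across AI cores.
        return self.registers // self.ai_cores


# Values taken from the article; "256 million" is read as 256 * 10**6.
jotunn8 = ChipSpec(
    chiplets=8,
    ai_cores_per_chiplet=2,
    riscv_per_chiplet=5,
    peak_petaflops=3.2,
    registers=256_000_000,
)

print(f"AI cores: {jotunn8.ai_cores}")
print(f"RISC-V processors: {jotunn8.riscv_processors}")
print(f"Petaflops per AI core: {jotunn8.petaflops_per_ai_core:.2f}")
print(f"Registers per AI core: {jotunn8.registers_per_ai_core:,}")
```

On these assumptions the chip has 16 AI cores and 40 RISC-V processors, with about 0.2 petaflops and 16 million registers per AI core.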

A Jon Peddie Research report describes a fast-growing AI processor market and predicts significant consolidation, with growth akin to past technology booms. The firm currently tracks 121 companies in this space and expects that number to shrink to about 25 key players by 2030.

As AI and computing continue to advance, VSORA is positioned as a strong contender to survive the upcoming consolidation, given its efficiency-focused chip design. The pace of change in AI and data centers suggests a promising future for companies that can capitalize on these developments.

The content above is a summary. For more details, see the source article.